Apple reveals a simple trick that makes AI 8% smarter

Scientists at Apple have presented a study showing that large language models (LLMs) can follow instructions significantly more accurately when trained with feedback from an old and proven tool: checklists.

Context: how language models are typically trained

After pre-training, an LLM typically undergoes a fine-tuning phase using Reinforcement Learning from Human Feedback (RLHF). At this stage, human reviewers rate the model’s responses: a “like” increases the likelihood of a similar response in the future, while a “dislike” decreases it. This approach helps make the responses more useful and safer.
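To make the “like/dislike” idea concrete, here is a minimal toy sketch, not the actual RLHF pipeline (which usually trains a separate reward model and uses an algorithm such as PPO). It assumes a hypothetical “model” that is just three scores (logits) over three canned responses, and applies one policy-gradient step per rating. The names `softmax` and `apply_feedback` and the learning rate are illustrative assumptions.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def apply_feedback(logits, chosen, reward, lr=0.5):
    """One REINFORCE-style update for a toy softmax policy.

    reward = +1.0 models a "like", reward = -1.0 a "dislike".
    The gradient of log p(chosen) w.r.t. logit i is 1[i == chosen] - p_i.
    """
    probs = softmax(logits)
    return [
        logit + lr * reward * ((1.0 if i == chosen else 0.0) - p)
        for i, (logit, p) in enumerate(zip(logits, probs))
    ]


# Hypothetical starting point: three candidate responses, equally likely.
start = [0.0, 0.0, 0.0]
after_like = apply_feedback(start, chosen=0, reward=+1.0)
after_dislike = apply_feedback(start, chosen=0, reward=-1.0)

print(softmax(after_like)[0])     # higher than the initial 1/3
print(softmax(after_dislike)[0])  # lower than the initial 1/3
```

In this toy setting a single scalar rating is the only signal, so the update cannot tell which part of a response earned the “like”.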

RLHF has weaknesses, however. The model can learn to produce “seemingly correct” answers that give the illusion of correctness without actually solving the problem.

What Apple researchers suggested

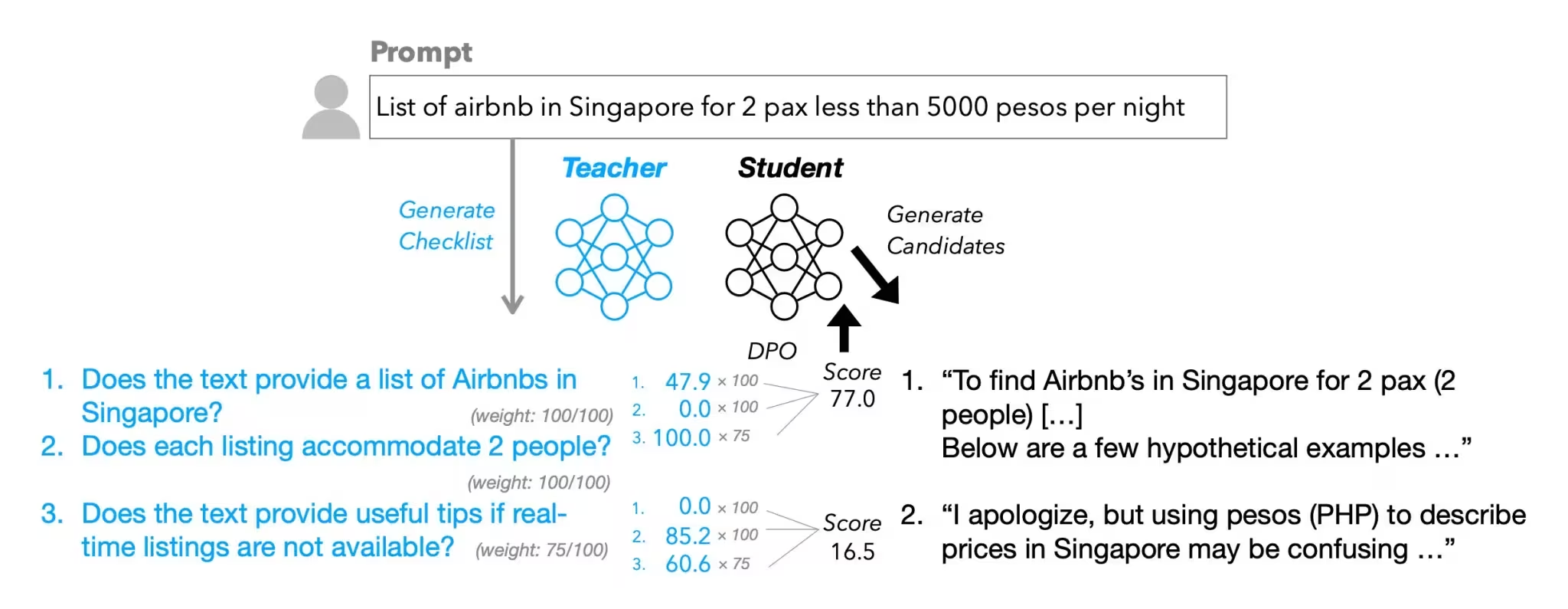

In the paper “Checklists Are Better Than Reward Models For Aligning Language Models”, the company proposed a new method: Reinforcement Learning from Checklist Feedback (RLCF).

- Instead of a general “like/dislike” rating, model responses are checked against a list of specific items (“Is it translated into Spanish?”, “Is there formatting?”, etc.).

- Each item is rated on a scale of 0 to 100.

- A more powerful model (“teacher”) checks the answers and assigns scores that become the training signal for fine-tuning the base (“student”) model.
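The list above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not Apple's implementation: the article does not say how per-item scores are combined, so the weighted average, the `weight` field, and the names `ChecklistItem`, `checklist_reward`, and `toy_judge` are all hypothetical. The keyword-based `toy_judge` stands in for the teacher model, which in practice would be a stronger LLM.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class ChecklistItem:
    question: str
    weight: float = 1.0  # hypothetical importance weight, not from the article


# A judge maps (response, checklist question) to a score from 0 to 100.
Judge = Callable[[str, str], float]


def checklist_reward(response: str, checklist: Sequence[ChecklistItem], judge: Judge) -> float:
    """Combine per-item 0-100 scores into one reward in [0, 1] (assumed: weighted mean)."""
    total_weight = sum(item.weight for item in checklist)
    if not checklist or total_weight <= 0:
        raise ValueError("checklist must contain items with positive total weight")
    weighted_sum = 0.0
    for item in checklist:
        score = min(100.0, max(0.0, judge(response, item.question)))  # clamp to 0-100
        weighted_sum += item.weight * score
    return weighted_sum / total_weight / 100.0


def toy_judge(response: str, question: str) -> float:
    """Keyword-based stand-in for the teacher model; purely illustrative."""
    if "Spanish" in question:
        return 100.0 if "hola" in response.lower() else 0.0
    if "formatting" in question:
        return 100.0 if response.lstrip().startswith("- ") else 20.0
    return 50.0


checklist = [
    ChecklistItem("Is it translated into Spanish?"),
    ChecklistItem("Is there formatting?"),
]

print(checklist_reward("- Hola, mundo", checklist, toy_judge))  # 1.0
print(checklist_reward("Hola, mundo", checklist, toy_judge))    # 0.6
```

Unlike a single “like/dislike”, the per-item scores show which requirement a response missed: the second response is in Spanish but loses points on formatting.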

Apple also created a dataset, WildChecklists, with 130,000 instructions and automatically generated checklists.

Results of the study

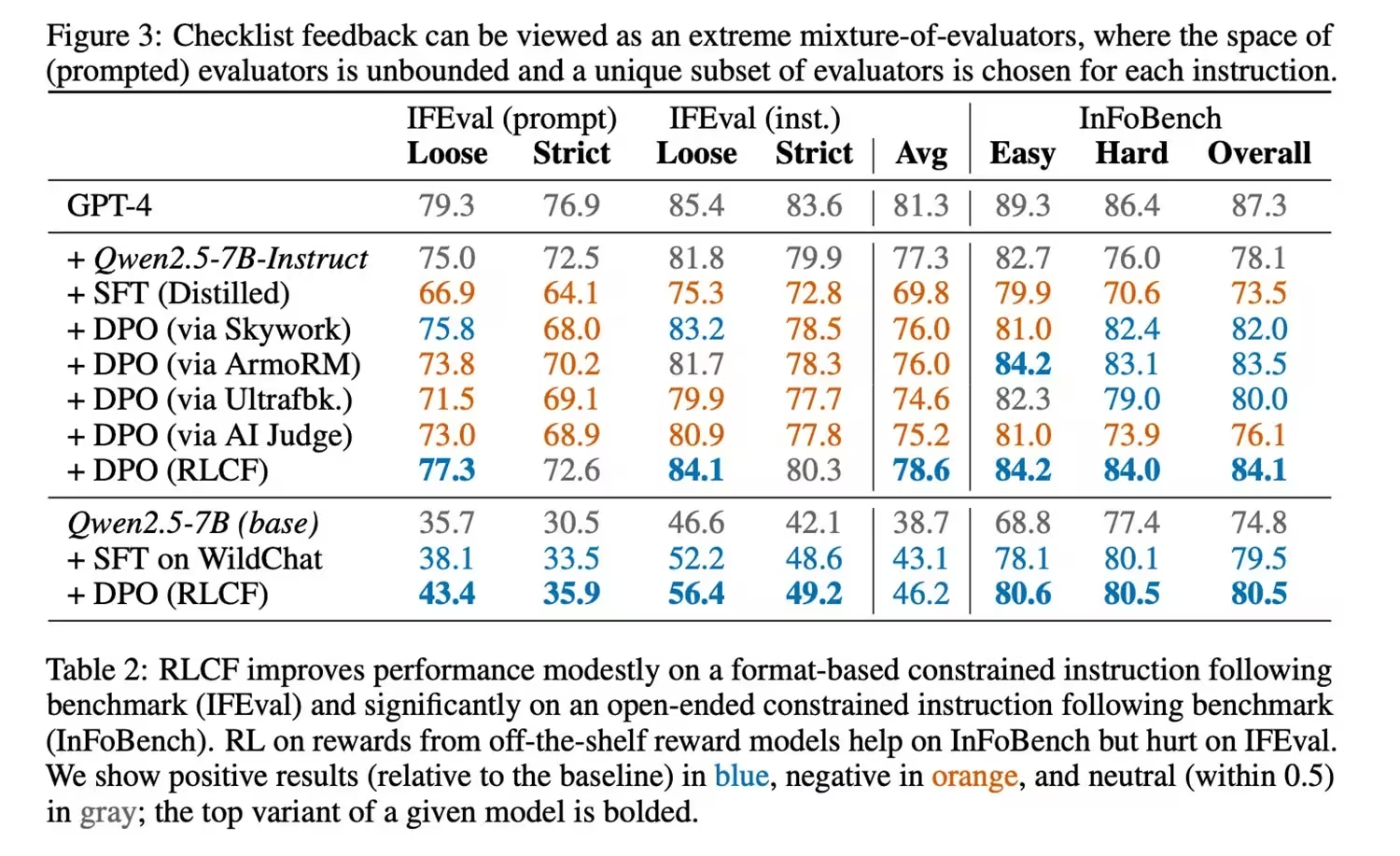

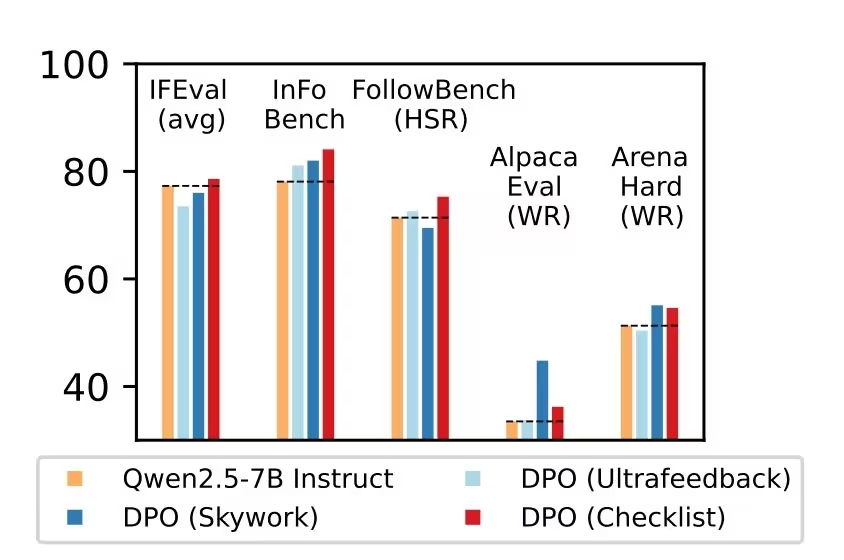

Using the RLCF method, the researchers tested the Qwen2.5-7B-Instruct model on five popular benchmarks. The results showed consistent improvement across all of them, including:

- +4 points in FollowBench

- +6 points in InFoBench

- +3 points in Arena-Hard

In individual tests, the improvement reached 8.2%. According to the authors, this makes checklist feedback a more effective method than classical reward models.

Limitations of the approach

- The method is designed for complex, multi-step instructions and may be less useful in other scenarios.

- Validation requires a more powerful model, making the process resource-intensive.

- Important: RLCF improves instruction adherence but does not solve the problem of model safety.

Why it matters

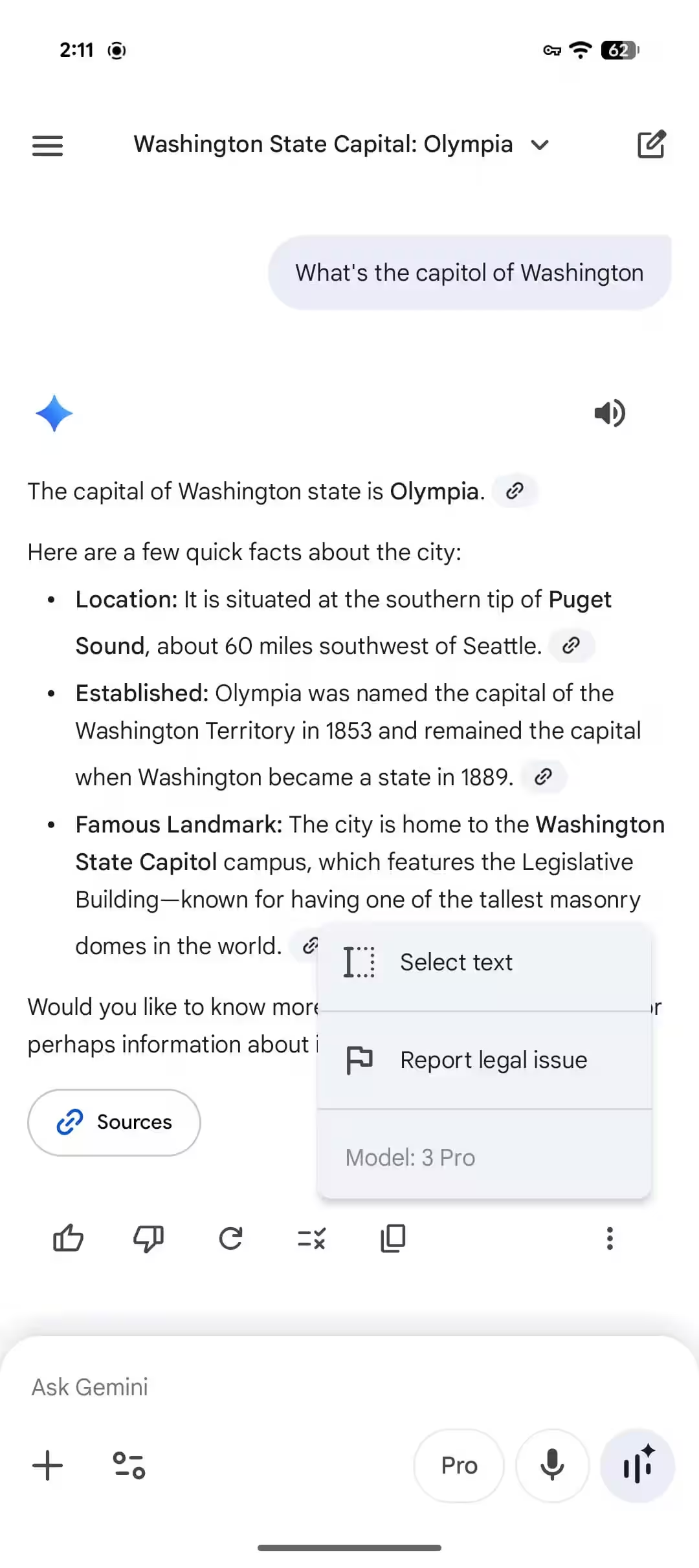

The authors emphasize that as LLMs grow more popular as personal assistants, users expect models to perform complex, step-by-step tasks rigorously and accurately. In the future, as such assistants gain more autonomy, accuracy in following instructions will be a key factor in their usefulness.